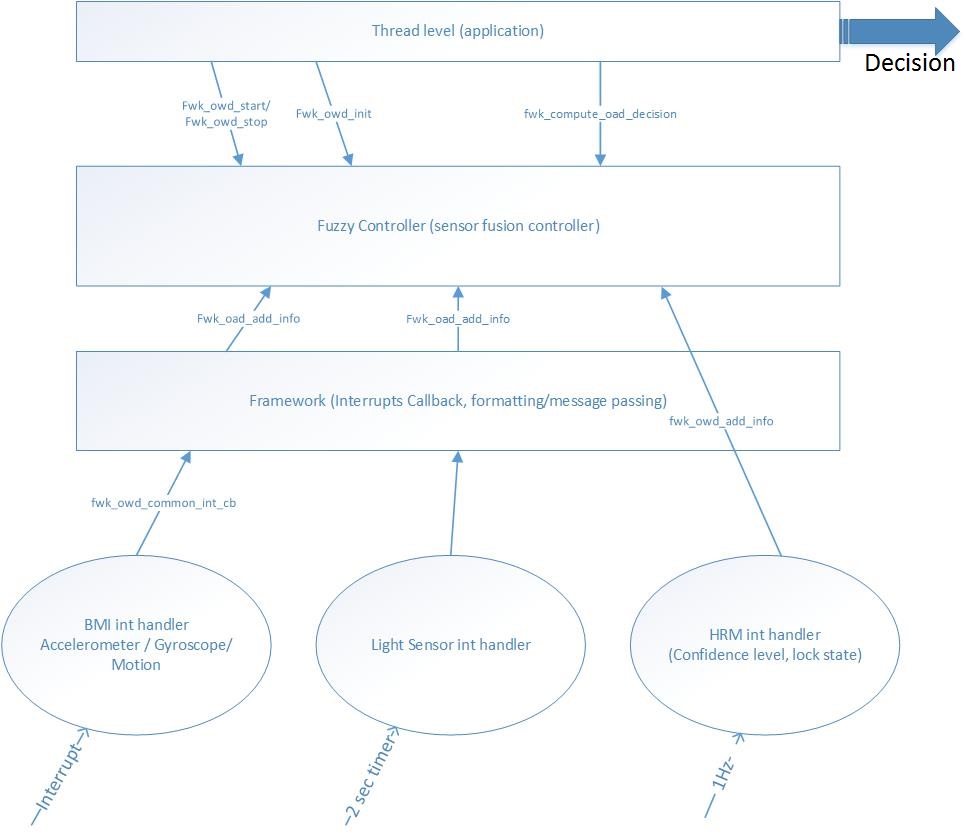

Description and architecture

In this system, the sensors are read under interrupt, while some low-speed processes are executed under a 1-second repetitive timer. The interrupt rates vary, from a few tens of ms (light measurements) to completely arbitrary rates (motion detection) or very long periods (heart rate value). Hence I had to define software components at 2 layers:

- An interrupt-level layer, which consists of various interrupt callbacks, some very basic formatting and message sending.

- A “Framework” level (this is the Fuzzy Controller), which basically gathers, formats and maintains the sensor information.

The controller is then called by the “Application level” layer, which is triggered at regular intervals (seconds), to compute the membership functions, evaluate the fuzzy rules and derive a decision (defuzzification).

Data Structures

To convey information from the Interrupt level to the Framework level, the following paradigm has been chosen:

- The ISR (interrupt service routine) sends a 2-byte data structure, whose mapping is specific to the originating sensor

- The ISR calls a Framework interrupt callback, supplying the following information: the originator and the sensor-specific bit-mapped value

// Originator of the OWD info

typedef enum { FROM_BMI_NO_MO, FROM_BMI_ANY_MO, FROM_OHRM, FROM_LS, MAX_OWD_CONTRIBUTORS } fwk_owd_from_t;

// decision type

typedef enum { ON_ARM_STATE, OFF_ARM_STATE, OWD_UNDEFINED_STATE } OWD_Decision_t;

Shared data structures are defined to hold information from the Sensor detection.

// Shared Data structures

//

// Case of BMI160 accelerometer

typedef struct fwd_owd_bmi {

union {

uint16_t val16; // raw 16-bit view of the event bits

struct {

uint8_t bmi_anymo_event : 1; // Any-motion

uint8_t bmi_nomo_event : 1; // no motion

uint8_t bmi_signimo_event : 1; // significant movement

uint8_t bmi_orient_fup : 1; // Orientation facing up

uint8_t bmi_orient_fdown : 1; // Orientation facing down

uint8_t bmi_orient_portrait_top : 1; // Orientation portrait top

uint8_t bmi_orient_portrait_down : 1; // Orientation portrait down

uint8_t bmi_orient_land_left : 1; // Orientation landscape left

uint8_t bmi_orient_lan_right : 1; // Orientation landscape right

uint8_t padding : 7;

} bits;

};

} fwk_bmi_events_t;

// Case of OHRM detector

typedef struct fwd_owd_ohrm {

union {

uint16_t val16;

struct {

uint8_t ohrm_conf_level : 8; // confidence level in %

uint8_t ohrm_locked : 1;

uint8_t padding : 7;

} bits;

};

} fwk_owd_ohrm_events_t;

// Case of ambient light sensing

typedef struct fwd_owd_light {

union {

uint16_t val16; // light sensor adc value (12 bits)

struct {

uint16_t val : 16;

} bits;

};

} fwk_owd_light_events_t;
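To make the 2-byte convention concrete, here is a minimal sketch of filling one of these structures the way an ISR would, and reading it back on the framework side through a `void *`. The type is a copy of the OHRM structure above; the helpers `sketch_make_ohrm_event` and `sketch_read_conf_level` are hypothetical names that are not part of the original code. Bit-field layout is implementation-defined in C, so the raw `val16` view should only be exchanged between code built with the same compiler and target.

```c
#include <stdint.h>

/* Copy of the OHRM event structure shown above (anonymous union needs C11). */
typedef struct fwd_owd_ohrm {
    union {
        uint16_t val16;
        struct {
            uint8_t ohrm_conf_level : 8; /* confidence level in % */
            uint8_t ohrm_locked     : 1;
            uint8_t padding         : 7;
        } bits;
    };
} fwk_owd_ohrm_events_t;

/* The whole event is expected to fit in the 2 bytes sent by the ISR. */
_Static_assert(sizeof(fwk_owd_ohrm_events_t) == 2, "event must be 2 bytes");

/* Hypothetical helper: build the event the way an ISR would. */
static fwk_owd_ohrm_events_t sketch_make_ohrm_event(uint8_t conf_percent, int locked)
{
    fwk_owd_ohrm_events_t ev = { 0 };
    ev.bits.ohrm_conf_level = conf_percent;
    ev.bits.ohrm_locked = locked ? 1u : 0u;
    return ev;
}

/* Hypothetical helper: framework side, receiving the event as a void pointer. */
static uint8_t sketch_read_conf_level(const void *data)
{
    const fwk_owd_ohrm_events_t *ev = (const fwk_owd_ohrm_events_t *)data;
    return ev->bits.ohrm_conf_level;
}
```

The compile-time size check is the useful part of the sketch: it fails the build if a compiler packs the bit-fields differently from what the 2-byte message format assumes.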

Interfaces to the OWD Framework

As an example, the BMI160 interrupt handler would call the framework callback for the any-motion and no-motion events:

void bmi160_isr_int2_handler(void)

{

// anymotion was detected

if ( .... )

{

fwk_bmi_events_t event = { 0 };

event.bits.bmi_anymo_event = 1;

fwk_owd_common_int_cb(FROM_BMI_ANY_MO, &event);

}

// nomotion was detected

if ( .... )

{

fwk_bmi_events_t event = { 0 };

event.bits.bmi_nomo_event = 1;

fwk_owd_common_int_cb(FROM_BMI_NO_MO, &event);

}

}

Similarly, the light sensor reading callback would call the framework as follows:

void fwk_owd_al_adc_cb(void)

{

uint16_t LsValue;

......

......

fwk_owd_add_info(FROM_LS, (void*)&LsValue); // LsValue holds the reading

.......

.......

}

Within the callback, the passed data is used to call the Controller appropriately. Depending on the sensor, this can be a pure value translation, but some processing can also be done. An interesting case is the AnyMotion/NoMotion events:

if (ActiveNoMotionStatus)

{

fwk_owd_add_event(FROM_BMI_NO_MO, true);

fwk_owd_add_event(FROM_BMI_ANY_MO, false);

}

else

{

fwk_owd_add_event(FROM_BMI_NO_MO, false);

fwk_owd_add_event(FROM_BMI_ANY_MO, true);

}

Notice that we had to duplicate the calls to signal both NO_MOTION and ANY_MOTION each time. The reason lies deep in the BMI specifications and operation. Basically, if we detect “ANY_MOTION”, that also means that “NO_MOTION” was NOT detected :). This sounds redundant, but it really isn’t for our purpose: each event feeds its own running average, so both averages have to be updated on every change.

Fuzzy Logic Controller (Sensor Fusion Controller)

The fuzzy-logic-based controller does three main things:

- It aggregates the data provided by the sensors into proper structures

- It maintains running averages of those data

- Upon being called (fwk_compute_owd_decision), it:

  - computes the membership values for each sensor (on the averaged values)

  - computes the inference rules

  - performs the defuzzification and derives the decision

As seen earlier, the controller has two main entry points, for sensors carrying analog information and sensors carrying digital information. Although this could have been done with a generic method, I preferred this approach for code clarity

void fwk_owd_add_info(fwk_owd_from_t orig, void *data);

void fwk_owd_add_event(fwk_owd_from_t orig, bool found);

fwk_owd_add_event basically falls back to the normal case after a simplistic transformation of the boolean value into a numerical value, as follows:

#define EVENT_FOUND_NUMERICAL (100)

#define EVENT_NOTFOUND_NUMERICAL (0)

void fwk_owd_add_event(fwk_owd_from_t orig, bool found)

{

uint16_t value;

// arbitrarily normalise to 0-100 range

if (found)

{

value = EVENT_FOUND_NUMERICAL;

}

else

{

value = EVENT_NOTFOUND_NUMERICAL;

}

fwk_owd_add_info(orig, (void *)&value);

}

Here I defined a compound data structure to hold all the data received, along with the averaged values and pointers to static functions such as the averaging function and the membership functions. It is definitely not required to do so; it is purely a matter of personal habit.

static const owd_contrib_t owdContribTable[MAX_OWD_CONTRIBUTORS] = { /* avg pAvgFunc pMFunc*/

{ &owd_data.bmiNoMo, stdAvgFunc, bmiNoMoMfArray}, /* BMI_NOMO*/

{ &owd_data.bmiAnyMo, stdAvgFunc, bmiAnyMoMfArray }, /* BMI_ANYMO*/

{ &owd_data.hrLockVal, stdAvgFunc, hrLockMfArray }, /* OHRM*/

{ &owd_data.lsValue , stdAvgFunc, lsValueMfArray } /* LS*/

};
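The type `owd_contrib_t` is not shown in this article. A plausible shape, inferred only from how the table is initialised and used (`.avg`, `.avgFunc`), could look like the sketch below. The membership function signature, the field name `mfArray`, and all the `sketch_` placeholders are assumptions made for illustration.

```c
#include <stdint.h>

/* Assumed signatures: only the averaging one is confirmed by stdAvgFunc. */
typedef void  (*owd_avg_func_t)(uint16_t * const avg, int depth, uint16_t newval);
typedef float (*owd_mf_func_t)(uint16_t avg);

typedef struct {
    uint16_t            *avg;     /* where the running average is stored */
    owd_avg_func_t       avgFunc; /* how the average is updated          */
    const owd_mf_func_t *mfArray; /* membership functions (assumed name) */
} owd_contrib_t;

/* Placeholders so that the sketch compiles on its own. */
static uint16_t sketch_avg_store;

static void sketch_replace_avg(uint16_t * const avg, int depth, uint16_t newval)
{
    (void)depth;
    *avg = newval; /* trivial "average": keep the last value */
}

static float sketch_mf_high(uint16_t avg)
{
    return (avg >= 50) ? 1.0f : 0.0f;
}

static const owd_mf_func_t sketch_mf_array[] = { sketch_mf_high };

static const owd_contrib_t sketch_table[] = {
    { &sketch_avg_store, sketch_replace_avg, sketch_mf_array },
};

/* Update one contributor through the table, in the style of fwk_owd_add_info. */
static void sketch_update(unsigned idx, int depth, uint16_t val)
{
    sketch_table[idx].avgFunc(sketch_table[idx].avg, depth, val);
}
```

Keeping the table `const` lets it live in flash on a small target, while the averages it points to stay in RAM.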

Let us now review the main entry point, fwk_owd_add_info.

#define STD_RUNNING_AVG_DEPTH (3) /* This means 2**3 = 8 */

#define VL_RUNNING_AVG_DEPTH (8) /* HR Conf level (256 seconds) */

void fwk_owd_add_info(fwk_owd_from_t orig, void *data)

{

uint16_t val;

// Accept auto-generated 0 values, to help decreasing the avg,

// as well as real non-zero values for actual sensor values

val = *(uint16_t*)data;

// average each value (running avg)

if (orig == FROM_OHRM)

{

val = val > OHRM_CFLVL_THR ? 1 : 0;

owdContribTable[orig].avgFunc(owdContribTable[orig].avg, VL_RUNNING_AVG_DEPTH, val);

}

else

owdContribTable[orig].avgFunc(owdContribTable[orig].avg, STD_RUNNING_AVG_DEPTH, val);

// update the noMotion status

if ((orig == FROM_BMI_NO_MO) && (val == EVENT_FOUND_NUMERICAL))

ActiveNoMotionStatus = true;

else if ((orig == FROM_BMI_ANY_MO) && (val == EVENT_FOUND_NUMERICAL))

ActiveNoMotionStatus = false;

}

So for each value, we call the averaging function with a depth that depends on the nature of the sensor. The averaging function can easily be changed by changing the table pointers. I opted for a standard running average:

/********************************************************************

* \brief generic averaging function whatever the data. We therefore assume a

* reference to the avg is passed as parameter (as the func is generic ..)

*

* \param[in] reference to the avg

* \param[in] depth of the estimator (2**depth)

* \param[in] new value to add to the avg

* \return None

*

* @remarks we use a running average .... this could be changed to something else

* if needed

********************************************************************/

static void stdAvgFunc(uint16_t * const avg, int depth, uint16_t newval)

{

uint32_t temp = ((uint32_t)(*avg)) << 16; // upconvert to high precision

temp -= (temp >> depth);

temp += ((((uint32_t)newval) << 16) >> depth);

*avg = ((temp >> 16) & 0x0000FFFF); // downconvert and writeback result

}
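A small harness helps to see how this estimator responds to a constant input. The function below is a verbatim copy of stdAvgFunc; `sketch_feed_constant` is a hypothetical test helper that is not part of the original code.

```c
#include <stdint.h>

/* Copy of stdAvgFunc from the article, unchanged. */
static void stdAvgFunc(uint16_t * const avg, int depth, uint16_t newval)
{
    uint32_t temp = ((uint32_t)(*avg)) << 16; /* upconvert to high precision */
    temp -= (temp >> depth);
    temp += ((((uint32_t)newval) << 16) >> depth);
    *avg = ((temp >> 16) & 0x0000FFFF);       /* downconvert and write back */
}

/* Hypothetical helper: feed the same value n times and return the average. */
static uint16_t sketch_feed_constant(uint16_t start, int depth, uint16_t val, int n)
{
    uint16_t avg = start;
    for (int i = 0; i < n; ++i)
        stdAvgFunc(&avg, depth, val);
    return avg;
}
```

With a constant input of 100 at depth 3, the first call yields 12 and the average settles at 93 rather than 100, because the fractional part is dropped on every write-back. For the same reason, an input smaller than 2**depth (for example 1 at depth 8) cannot lift an average that is at 0. This is worth keeping in mind when choosing the membership function breakpoints; an alternative would be to keep the 32-bit fixed-point accumulator between calls.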

As a result, we maintain sensor values that are regularly updated and averaged. Now for the interesting part, the Fuzzy Rules computation.

Fuzzy Operators / Fuzzy Rules

Let’s recall that we have to compute fuzzy rules which look like this

if motion low and hr not locked and ambient light high

To do this, we first need to define the fuzzy operators. There are 2 main approaches: one based on MIN and MAX operators (recommended by Prof. Zadeh), the other based on probabilistic operations. Let’s stick with the latter.

#define f_one 1.0f

#define f_zero 0.0f

#define f_or(a,b) (((a)+(b))-((a)*(b))) // OR

#define f_and(a,b) ((a)*(b)) // PROD

#define f_not(a) (f_one - (a)) // NOT (argument parenthesised so expressions expand safely)
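As a quick check of the probabilistic operators, the sketch below reproduces them (with the argument of `f_not` parenthesised) next to the MIN/MAX variants for comparison. The `z_and`/`z_or` names and the `sketch_rule_strength` helper are hypothetical, and using `f_not` for “hr not locked” is only an illustration.

```c
/* Probabilistic operators, as in the article */
#define f_one      1.0f
#define f_zero     0.0f
#define f_or(a,b)  (((a)+(b))-((a)*(b)))
#define f_and(a,b) ((a)*(b))
#define f_not(a)   (f_one - (a))

/* MIN/MAX (Zadeh) operators, shown only for comparison */
#define z_and(a,b) (((a) < (b)) ? (a) : (b))
#define z_or(a,b)  (((a) > (b)) ? (a) : (b))

/* Strength of "motion low and hr not locked and ambient light high" */
static float sketch_rule_strength(float motion_low, float hr_locked, float light_high)
{
    float temp = f_and(motion_low, f_not(hr_locked));
    return f_and(temp, light_high);
}
```

For two memberships of 0.5, the probabilistic OR gives 0.75 and the probabilistic AND gives 0.25, whereas MIN/MAX would give 0.5 in both cases. The product form therefore penalises rules in which several conditions are only partially met.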

So now we can compute the Fuzzy Rules inferences. We compute a value for each of the propositions. For example, the “certainly off-arm” proposition was defined by 4 rules, so we infer the proposition value using RSS (Root of Sum of Squares). RSS was chosen after experimentation; one should refer to the various documents mentioned in the first article of this series. For this particular proposition, the inference is as follows:

// Rules 1, 2, 3, 3' ==> certainly

// Rule 1

// 1. if motion low and hr not locked and ambient light high ==> certainly off-arm

temp = f_and(MotionMFunc_LOW(owd_data.bmiAnyMo), OhrmMFunc_CFL_OFF_ARM(owd_data.hrLockVal));

temp = f_and(temp, LsMFunc_HIGH(owd_data.lsValue));

certainly = pow_s(temp, 2);

// Rule 2

// 2. if motion high and hr not locked and ambient light high ==> certainly off-arm

temp = f_and(MotionMFunc_HIGH(owd_data.bmiAnyMo), OhrmMFunc_CFL_OFF_ARM(owd_data.hrLockVal));

temp = f_and(temp, LsMFunc_HIGH(owd_data.lsValue));

certainly += pow_s(temp, 2);

// Rule 3

// 3. if motion low and hr not locked and (ambient light low or ambient light moderate) ==> certainly off-arm

temp = f_and(MotionMFunc_LOW(owd_data.bmiAnyMo), OhrmMFunc_CFL_OFF_ARM(owd_data.hrLockVal));

temp = f_and(temp, f_or(LsMFunc_LOW(owd_data.lsValue), LsMFunc_MID(owd_data.lsValue)));

certainly += pow_s(temp, 2);

// Rule 3'

// 3'. if ambient light high ==> certainly off-arm

temp = LsMFunc_HIGH(owd_data.lsValue);

certainly += pow_s(temp, 2);

// RSS: root of the sum of the squared rule strengths

certainly = root2_s(certainly);

One will notice the use of the operators previously defined and the various membership functions for each of the fuzzy variables subsets. Finally, we make use of optimised POW and ROOT functions, to minimize the runtime penalty (see my article on these optimised functions).
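The membership functions themselves (`MotionMFunc_LOW`, `LsMFunc_HIGH`, and so on) are not listed in this article. As an illustration only, a pair of piecewise-linear “shoulder” functions over an averaged 0-100 input could be written as below. The breakpoints 20 and 60 and the `sketch_` names are made up for the example; they are not the tuned values.

```c
#include <stdint.h>

#define SKETCH_LOW_END    20u /* up to this value, not "high" at all (assumed) */
#define SKETCH_HIGH_START 60u /* from this value, fully "high"       (assumed) */

/* Degree to which x is "high": 0 up to 20, linear ramp, 1 from 60 upwards. */
static float sketch_mf_high(uint16_t x)
{
    if (x <= SKETCH_LOW_END)
        return 0.0f;
    if (x >= SKETCH_HIGH_START)
        return 1.0f;
    return (float)(x - SKETCH_LOW_END) / (float)(SKETCH_HIGH_START - SKETCH_LOW_END);
}

/* Degree to which x is "low": mirror image of the "high" ramp. */
static float sketch_mf_low(uint16_t x)
{
    return 1.0f - sketch_mf_high(x);
}
```

An averaged value of 40 is then “high” to degree 0.5 and “low” to degree 0.5, which is exactly the kind of overlap the fuzzy rules rely on.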

Similarly, the computation of all the other proposition values (probably, certainly not) is done.
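Since every proposition is combined the same way, the RSS step can be factored into a small helper. This is only a sketch: `sketch_root2` is a plain Newton iteration standing in for the optimised `root2_s`, and `sketch_rss` is a hypothetical name.

```c
#include <stddef.h>

/* Stand-in for root2_s(): plain Newton iteration, NOT the optimised version. */
static float sketch_root2(float x)
{
    if (x <= 0.0f)
        return 0.0f;
    float r = (x > 1.0f) ? x : 1.0f; /* start at or above the root */
    for (int i = 0; i < 20; ++i)     /* plenty for the small sums used here */
        r = 0.5f * (r + x / r);
    return r;
}

/* Root of the sum of the squared rule strengths. */
static float sketch_rss(const float *strength, size_t n)
{
    float sum = 0.0f;
    for (size_t i = 0; i < n; ++i)
        sum += strength[i] * strength[i];
    return sketch_root2(sum);
}
```

Two rules firing at 0.3 and 0.4 combine to about 0.5. Note that the result is not bounded by 1: two rules firing at 0.8 combine to about 1.13. The weighted average used for defuzzification divides by the sum of the proposition values, so it tolerates this.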

Defuzzification

As an outcome, we have a numerical value for each proposition, which is stored in variables like “certainly” (see the code above), “certainlyNot”, etc.

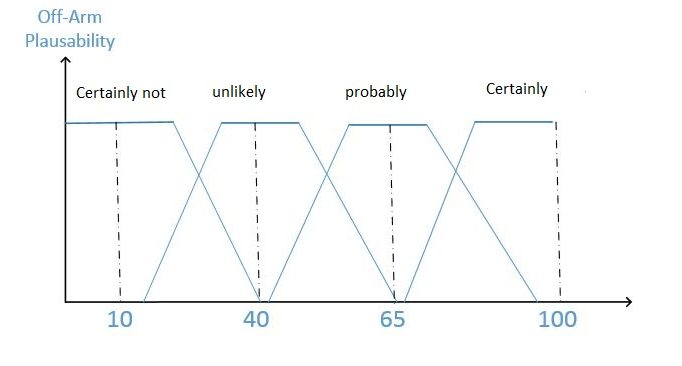

The only small problem is that statements like “Certainly” and “Probably” also have to be quantified, so that we can calculate a “crisp” decision value out of our rules. Let us arbitrarily define the output variable “off-arm plausibility” as follows

That way we can compute the crisp decision (Defuzzification) via the COG method, which is commonly used. COG (Center Of Gravity) can be computed, in our case, with the very common formula

// Decision (using COG)

temp = (((10.0f * certainlyNot) + (40.0f * unlikely) + (65.0f * probably) + (90.0f * certainly)) / (certainlyNot + unlikely + probably + certainly));

It assumes that the COG of each subset is right at its middle, that is 10 for “certainly not”, 40 for “unlikely”, 65 for “probably” and 90 for “certainly”.
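Written as a function, the same weighted average reads as follows. The sketch adds a guard for the case where no rule fires at all (all four values are zero), in which the division is undefined. The fallback value and the `sketch_` names are assumptions, not part of the original code.

```c
/* Centres of the output subsets, taken from the formula above */
#define COG_CERTAINLY_NOT 10.0f
#define COG_UNLIKELY      40.0f
#define COG_PROBABLY      65.0f
#define COG_CERTAINLY     90.0f

/* Returned when no rule fires; an assumed, deliberately low value. */
#define SKETCH_NO_EVIDENCE 0.0f

static float sketch_cog(float certainlyNot, float unlikely,
                        float probably, float certainly)
{
    float den = certainlyNot + unlikely + probably + certainly;
    if (den <= 0.0f)
        return SKETCH_NO_EVIDENCE; /* avoid dividing 0 by 0 */

    float num = (COG_CERTAINLY_NOT * certainlyNot) + (COG_UNLIKELY * unlikely)
              + (COG_PROBABLY * probably) + (COG_CERTAINLY * certainly);
    return num / den;
}
```

For example, with “probably” and “certainly” both at 0.5 and the other two at 0, the crisp value is (32.5 + 45) / 1 = 77.5. Returning a low value when there is no evidence keeps the result below any reasonable decision threshold, which matches the conservative design goal.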

The temp variable then contains the degree of plausibility of the “off-arm” output variable. By using a carefully chosen decision threshold, one can derive a decision. For example:

if (temp> DECISION_THR)

*decision = OFF_ARM_STATE; // mean of maxima for certainly

else

*decision = OWD_UNDEFINED_STATE;

This is a simplistic example, but it meets the design objective set at the beginning, which was very conservative.

Experimental results / improvements

After quite a lot of testing and parameter tuning, we could reach a correct decision on “typical cases”, like “device lying on the table”, at close to a 100% success rate.

For other cases, some false positives were recorded. These could clearly be reduced by adjusting the rules. As our design goal was conservative (i.e. better not to do anything if not certain), this was OK and also suited incremental improvements of the controller.

Finally, it seems that the most difficult part lies in the definition of the rules. When I first started, I very rapidly came up with as many as 11 rules. It then appeared that there were a lot of redundancies, and after experimentation, I dropped some of them. However, the set I ended up with is clearly sub-optimal. Improving in this area clearly requires more formal methods than simple gut feeling …